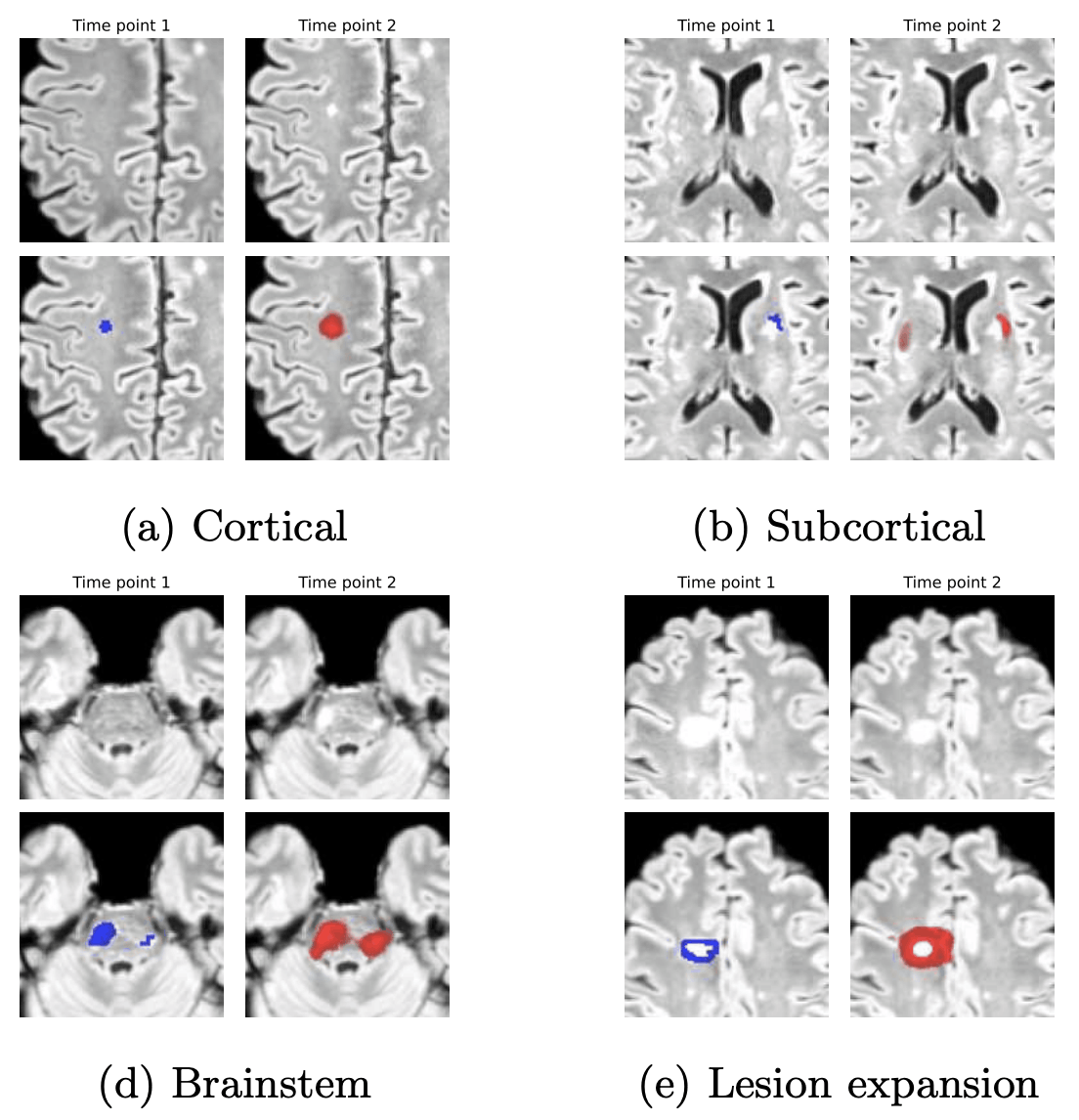

Self-supervised Lesion Change Detection and Localisation in Longitudinal Multiple Sclerosis Brain Imaging

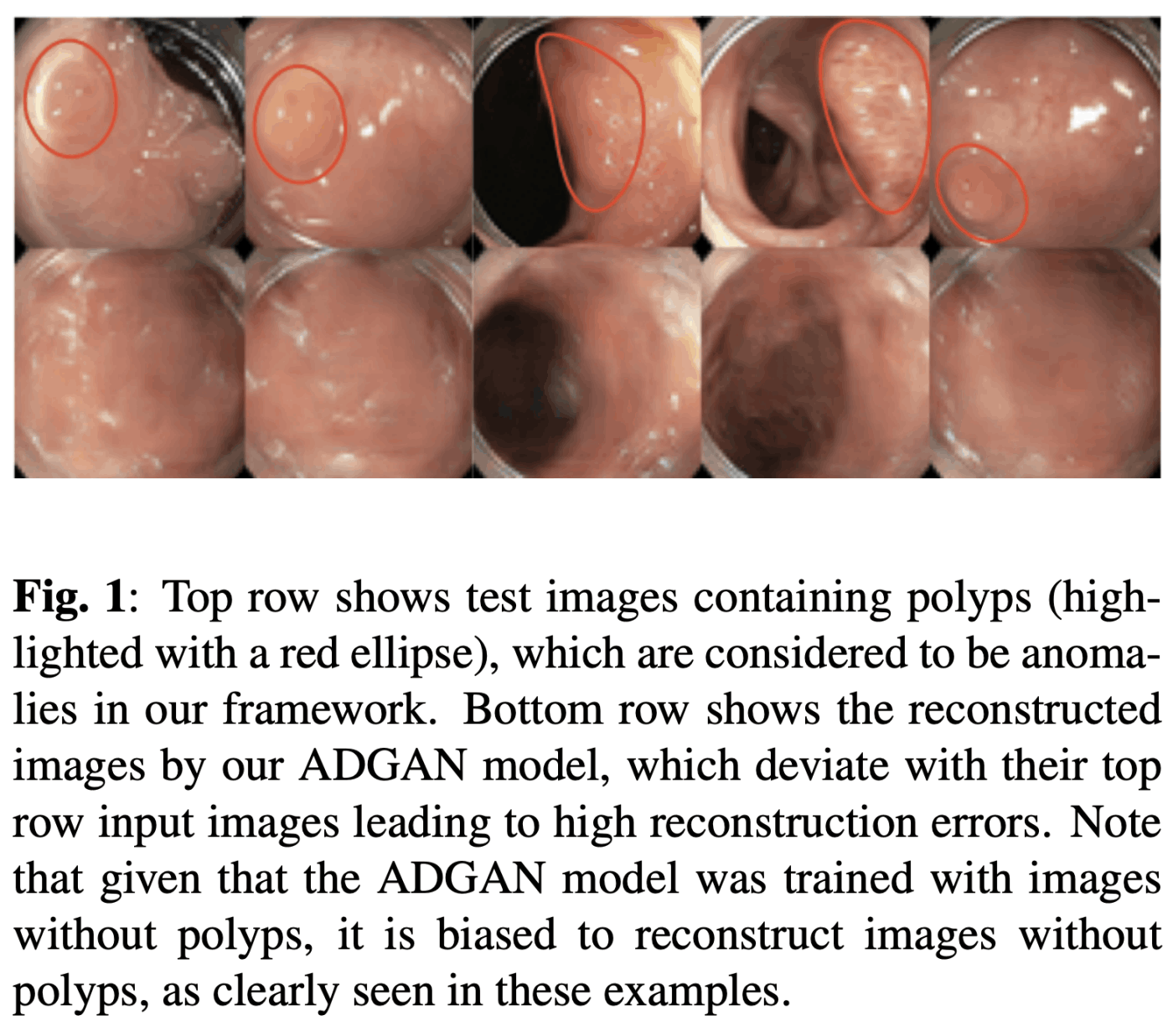

Longitudinal imaging forms an essential component in the management and follow-up of many medical conditions. The presence of lesion changes on serial imaging can have significant impact on clinical decision making, highlighting the important role for automated change detection. Lesion changes can represent anomalies in serial imaging, which implies a limited availability of annotations and a wide variety of possible changes that need to be considered. Hence, we introduce a new unsupervised anomaly detection and localisation method trained exclusively with serial images that do not contain any lesion changes.