Part-based modelling of compound scenes from images

2015, Jun–December

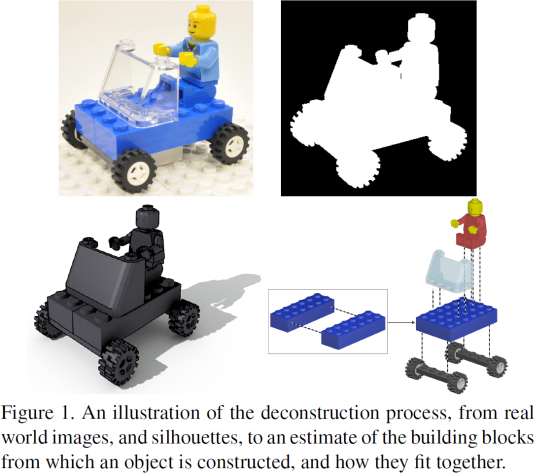

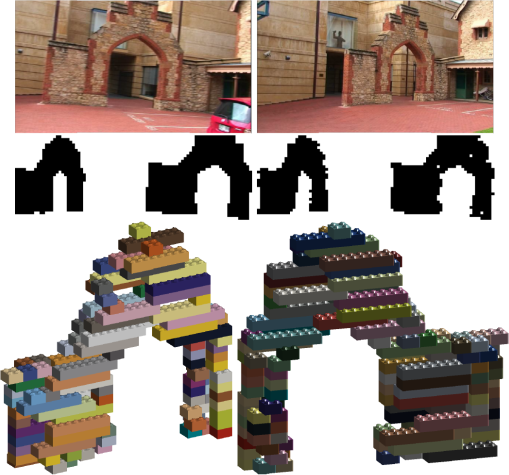

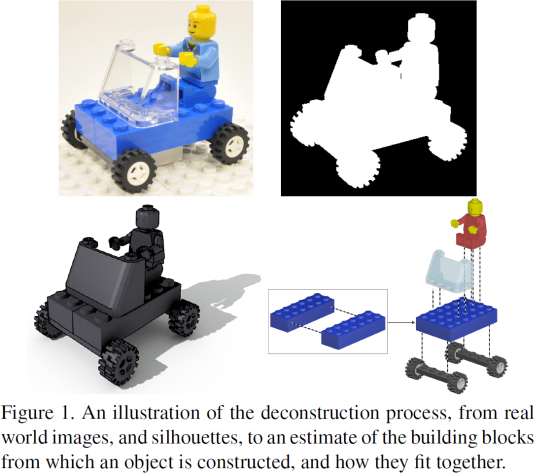

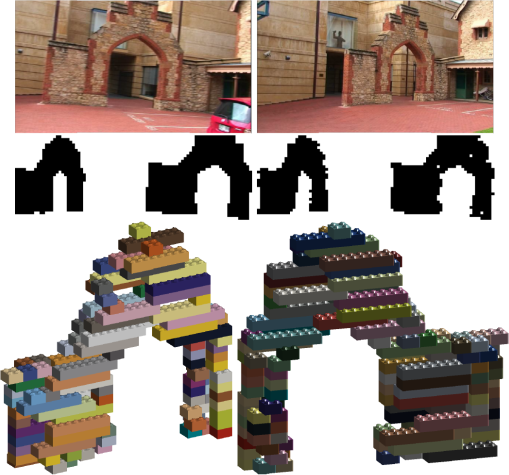

We are interested in the problem of estimating the structure of compound scenes from a set of images. Such scenes are prevalent in our everyday environment, and in many cases our knowledge of their innate structure is essential to our understanding of them. They include man made objects such as buildings, furniture, and cars, but also natural objects such as animals and plants. Our goal is to find the simplest construction which explains the shape of the scene, using a given library of parts. Unlike most work on the recovery of shape from images, our method does not generate a point cloud, or a volume, but a structural explanation of way the scene depicted is constructed. In this sense it is aligned with the blocks-world approach from the 1960s.

Much like the blocks-world approaches, our goal is to recover a semantic model of the structure of the scene. Instead of creating a simpler volumetric, or point cloud model of a scene, we wish to create a model which captures interdependencies between parts of a scene, and allows us to say “These are the wheels of the car so this is how it will move.” (fig. 1), or “This is how a wall might collapse in an accident, or a temple might collapse in an earthquake”.

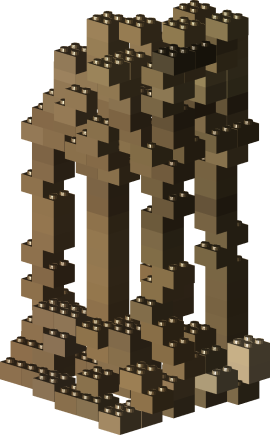

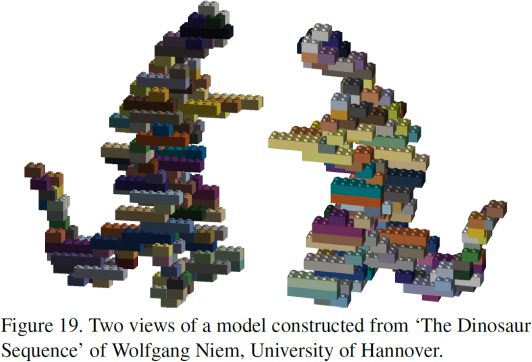

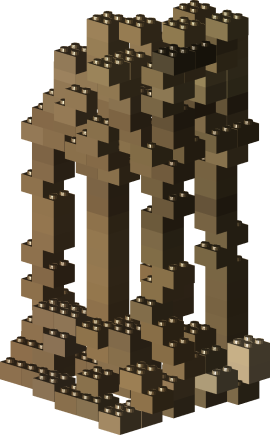

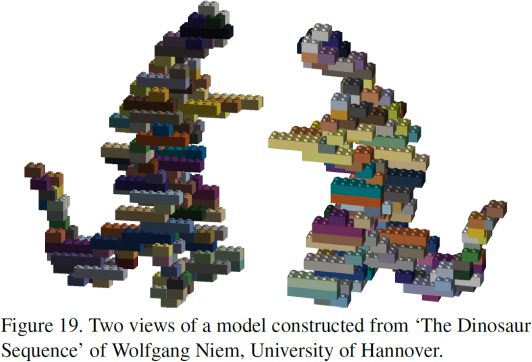

In this work, we focus on Lego block based reconstructions of both synthetic and natural scenes. We do this for two reasons: The use of Lego blocks emphasizes the tactile and interactive nature of our reconstructions, while the grid structure of Lego also provides a natural discrete parametrization of our pose space. It should be emphasized, however, that despite the grid structure of Lego, our method can be successfully applied to reconstructing natural structures that are not based on a grid.

The CVPR paper describing this work is available here, the Powerpoint for the talk is here, and is available as pdf here.