Star Tracking and Attitude Estimation

Our novel event-based star tracking technique was a Best Paper Award Finalist at the CVPR'19 Workshop on Event-based Vision and Smart Cameras.

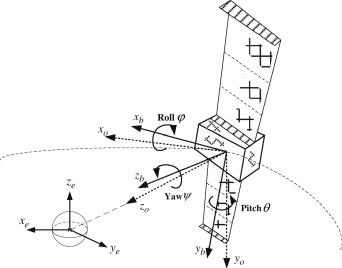

Spacecraft Attitude

The attitude of a spacecraft is the 3DOF orientation (roll, pitch, yaw) of its body frame with respect to an inertial frame, such as the celestial reference frame. Attitude control is a fundamental capability in space flight, and the subsystem responsible for it is called the Attitude Determination and Control System (ADCS). As the name suggests, an ADCS has two main components: estimating the current attitude, and sending an appropriate sequence of commands to the actuators (reaction wheels, thrusters, etc.) to achieve the desired body orientation.
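
One common way to make the 3DOF orientation concrete is a 3×3 rotation matrix built from the roll, pitch and yaw angles. The sketch below assumes the Z-Y-X (yaw, then pitch, then roll) convention, angles in radians, and a matrix that maps body-frame vectors into the inertial frame; other conventions are equally common, and `rpy_to_matrix` is a hypothetical helper name.

```python
import math


def rpy_to_matrix(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) @ Ry(pitch) @ Rx(roll), as a list of rows.

    Assumed convention: R maps body-frame vectors to the inertial frame.
    """
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


# A pure 90-degree yaw: the body x-axis (first column) points along inertial y.
R = rpy_to_matrix(0.0, 0.0, math.pi / 2)
body_x_in_inertial = [R[i][0] for i in range(3)]
```

For example, with a yaw of π/2 the first column becomes (0, 1, 0) up to round-off, i.e. the body x-axis is aligned with the inertial y-axis.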

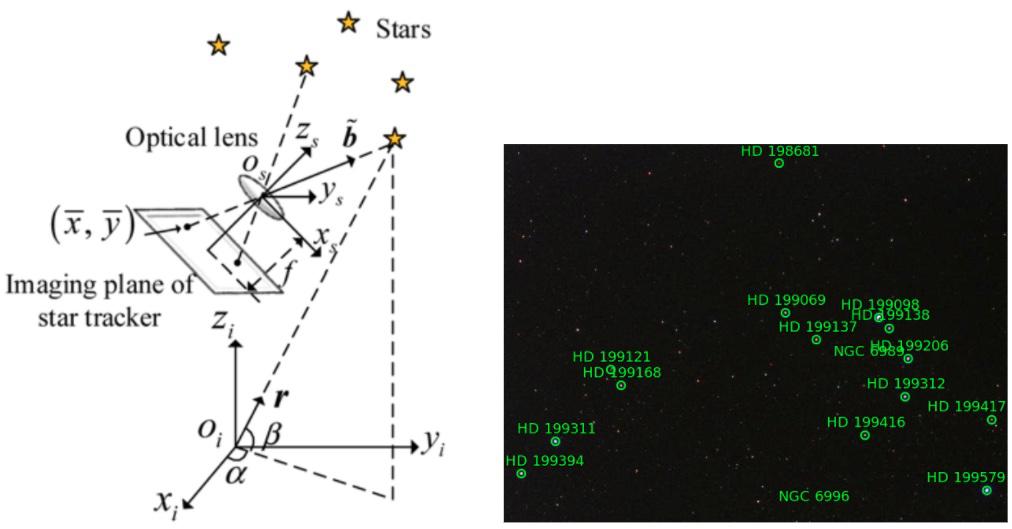

Star Trackers

Our work focuses on the attitude determination problem. Several kinds of sensors are used to estimate spacecraft attitude, such as sun sensors and magnetometers. Star trackers, however, are widely established as the state of the art in spacecraft attitude estimation, especially for supporting high-precision orientation control. Star trackers are primarily optical devices that estimate the attitude of a spacecraft by recognising and tracking star patterns. The figure below illustrates the geometry of star tracking.

Event Cameras for Star Tracking

Currently, most star trackers use conventional optical sensors. We propose using event sensors for star tracking instead. Unlike a conventional optical sensor (CMOS, CCD), which captures full frames at a fixed rate, an event sensor detects intensity changes asynchronously at each pixel. The output of an event camera is a stream {(x, y, t, p)}, where (x, y) are the 2D coordinates of an event on the image plane, t is the time of the event, and p is the polarity of the event (+ or -, indicating an increase or decrease in brightness). The following figures illustrate the Davis 240C event camera from iniVation, and an event stream from observing a star field.
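
To make the {(x, y, t, p)} format concrete, the sketch below stores events as simple records and sums signed polarities per pixel over a time window, producing an "event image" of one slice of the stream. The `Event` type and `accumulate` function are hypothetical; timestamps in seconds and polarity encoded as +1/-1 are assumptions (real devices typically report integer microsecond timestamps through their own drivers).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    x: int    # pixel column
    y: int    # pixel row
    t: float  # timestamp in seconds (assumed unit)
    p: int    # polarity: +1 brightness increase, -1 decrease (assumed encoding)


def accumulate(events, width, height, t_start, t_end):
    """Sum polarities per pixel for events with t_start <= t < t_end.

    Returns a height x width list of lists indexed as img[y][x].
    """
    img = [[0] * width for _ in range(height)]
    for e in events:
        if t_start <= e.t < t_end:
            img[e.y][e.x] += e.p
    return img


stream = [
    Event(1, 0, 0.000, +1),
    Event(1, 0, 0.004, +1),
    Event(2, 1, 0.006, -1),
    Event(0, 2, 0.020, +1),  # outside the 10 ms window below
]
img = accumulate(stream, width=3, height=3, t_start=0.0, t_end=0.010)
```

Because the stream is only a list of records, windowing it at any time granularity is cheap; the window length is a free design choice rather than a fixed frame period.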

Why use event cameras for star tracking?

There are two potential benefits of using event cameras for star tracking. First, due to the sparsity of the scene (relatively few bright spots against a black background), the number of events generated tends to be small relative to the number of pixels. Hence, an event camera may consume less power. Second, event sensors have high temporal resolution (e.g., the iniVation Davis 240C has microsecond resolution), which could enable higher-speed star tracking. This may be useful for ultra-fine attitude control.
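
The sparsity argument can be illustrated with simple arithmetic comparing how many values each sensor produces per second. The sketch assumes a frame sensor with the same 240 × 180 resolution as the Davis 240C read out at a hypothetical 30 fps, and a hypothetical star-field event rate of 10,000 events per second; real event rates depend on optics, scene motion and contrast thresholds, and data volume is only a rough proxy for power consumption. `readout_ratio` is a hypothetical helper.

```python
def readout_ratio(event_rate_hz, width, height, fps):
    """Events per second divided by pixel values per second of a frame sensor."""
    pixels_per_second = width * height * fps
    return event_rate_hz / pixels_per_second


# Frame sensor at 240 x 180 pixels and a hypothetical 30 fps.
frame_rate = 240 * 180 * 30  # pixel values per second
# Hypothetical event rate for a sparse star field.
ratio = readout_ratio(10_000, 240, 180, 30)
print(f"{frame_rate} pixel values/s, event/pixel ratio = {ratio:.4f}")
```

Under these assumed numbers the event camera produces well under 1% of the values a frame sensor would, which is the intuition behind the potential power saving.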

Our Research

We have developed a technique for star tracking using event cameras. Our technique includes a novel event processing scheme to estimate attitude changes at high frequency from event streams. Results show that our method can achieve an attitude estimation accuracy of 1 degree RMSE, which is on par with commercially available star trackers. Sample results are provided below.
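
An RMSE in degrees requires a scalar error between an estimated and a ground-truth attitude. One standard choice, assumed here (the papers may define the error differently, e.g. per axis), is the geodesic angle of the relative rotation, θ = arccos((trace(R_estᵀ R_gt) − 1) / 2), followed by the root mean square over all timestamps. The function names are hypothetical.

```python
import math


def rotation_angle_error_deg(R_est, R_gt):
    """Geodesic angle (degrees) of the relative rotation R_est^T @ R_gt."""
    # trace(R_est^T R_gt) equals the element-wise sum of R_est * R_gt.
    tr = sum(R_est[i][j] * R_gt[i][j] for i in range(3) for j in range(3))
    # Clamp to [-1, 1] so round-off cannot push acos out of its domain.
    c = max(-1.0, min(1.0, (tr - 1.0) / 2.0))
    return math.degrees(math.acos(c))


def rmse_deg(estimates, ground_truth):
    """Root-mean-square of per-timestamp angular errors, in degrees."""
    errs = [rotation_angle_error_deg(a, b) for a, b in zip(estimates, ground_truth)]
    return math.sqrt(sum(e * e for e in errs) / len(errs))


I = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
th = math.radians(1.0)
Rz = [[math.cos(th), -math.sin(th), 0.0],
      [math.sin(th), math.cos(th), 0.0],
      [0.0, 0.0, 1.0]]
# One estimate off by a 1-degree rotation about z, one exact.
score = rmse_deg([Rz, I], [I, I])
```

The geodesic angle is independent of the Euler-angle convention, which makes it convenient for comparing trackers that report attitude in different parameterisations.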

Relevant Publications

- Tat-Jun Chin, Samya Bagchi, Anders Eriksson, Andre van Schaik. Star Tracking using an Event Camera. In CVPR Workshop on Event-based Vision and Smart Cameras, 2019. Best Paper Award Finalist. arXiv preprint

- Samya Bagchi and Tat-Jun Chin. Event-based Star Tracking via Multiresolution Progressive Hough Transforms. In Winter Conference on Applications of Computer Vision (WACV), 2020. arXiv preprint

- Daqi Liu, Álvaro Parra, and Tat-Jun Chin. Globally Optimal Contrast Maximisation for Event-based Motion Estimation. In Computer Vision and Pattern Recognition (CVPR), 2020. arXiv preprint, Source code

- Daqi Liu, Álvaro Parra, and Tat-Jun Chin. Spatiotemporal Registration for Event-based Visual Odometry. In Computer Vision and Pattern Recognition (CVPR), 2021. arXiv preprint, Source code

Dataset

The dataset (event streams) used in the first paper above, together with ground-truth attitudes, is available (download). If you use our dataset (or part thereof) in your work, please cite:

- T.-J. Chin, S. Bagchi, A. P. Eriksson, A. van Schaik. Star Tracking using an Event Camera. CVPR Workshop on Event-based Vision and Smart Cameras, 2019.

@inproceedings{chin2019,
  author = {T.-J. Chin and S. Bagchi and A. P. Eriksson and A. {van Schaik}},
  title = {Star tracking using an event camera},
  year = {2019},
  booktitle = {CVPR Workshop on Event-based Vision and Smart Cameras}
}